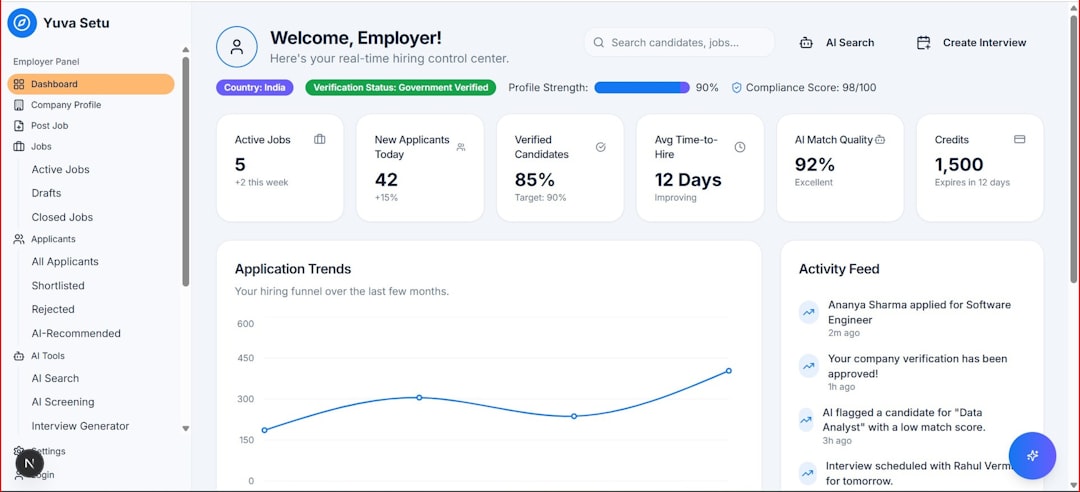

Artificial intelligence is no longer confined to static dashboards or batch-processed results. Today’s users expect systems that speak, type, and respond in real time, mirroring human interaction as closely as possible. This demand has fueled the rise of AI response streaming applications: platforms and frameworks that let developers build dynamic, interactive AI interfaces with continuous output. Streamlit and similar frameworks are reshaping how developers prototype and deploy AI-powered applications, making real-time intelligence more accessible than ever.

TLDR: AI response streaming apps enable real-time AI interactions by delivering outputs token-by-token instead of waiting for complete responses. Platforms like Streamlit simplify the creation of interactive AI interfaces without heavy frontend development. These tools accelerate prototyping, enhance user engagement, and open new opportunities for chatbots, code assistants, data tools, and more. As streaming becomes standard, real-time AI UI design is emerging as a core development skill.

The Shift Toward Real-Time AI Interfaces

Traditional AI applications often followed a simple pattern: submit a query, wait for processing, receive a fully completed result. While functional, this approach breaks the illusion of conversation and immediacy. Modern users, trained by live chat apps and instant search results, expect ongoing feedback.

Response streaming changes that wait-and-see paradigm. Instead of returning a completed block of text or data, the AI sends incremental chunks as they are generated. In language models, this often means token-by-token text generation. In data apps, it could involve progressive chart rendering or incremental prediction updates.
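A small Python sketch makes the difference concrete. `simulated_model` is a hypothetical stand-in for a model API that produces text in word-sized chunks. The blocking function waits for every chunk before returning. The streaming function passes each chunk on as soon as it exists.

```python
from typing import Iterator


def simulated_model(prompt: str) -> Iterator[str]:
    """Hypothetical stand-in for a streaming model: yields one word-sized chunk at a time."""
    for word in f"Streaming answer to: {prompt}".split():
        yield word + " "


def blocking_response(prompt: str) -> str:
    """Wait-and-see pattern: collect every chunk, then return the finished text."""
    return "".join(simulated_model(prompt))


def streaming_response(prompt: str) -> Iterator[str]:
    """Streaming pattern: hand each chunk to the caller the moment it is produced."""
    yield from simulated_model(prompt)


if __name__ == "__main__":
    for chunk in streaming_response("hello"):
        print(chunk, end="", flush=True)  # a UI would append this chunk to the display
    print()
```

A UI built on `streaming_response` can start drawing after the first chunk. One built on `blocking_response` shows nothing until the last chunk arrives.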

This shift makes applications feel:

- More interactive

- Faster (even if total response time is similar)

- More human-like

- More transparent about what the system is doing

The user is no longer waiting in silence. They see the AI “thinking.”

What Are AI Response Streaming Apps?

AI response streaming apps are development environments or frameworks that enable:

- Real-time streaming of AI-generated outputs

- Reactive user interfaces

- Quick iteration and prototyping

- Tight integration with AI APIs and models

Streamlit is a prominent example. Originally designed for data science dashboards, Streamlit evolved into a powerful tool for building AI-powered web apps with minimal frontend expertise. Developers can write mostly Python code and deploy interactive AI tools rapidly.

But Streamlit is just one piece of a growing ecosystem. Other tools and frameworks provide similar capabilities, often adding enhanced state management, collaborative features, or advanced UI control.

Why Streaming Matters in AI Applications

Streaming isn’t just a cosmetic enhancement; it meaningfully improves the user experience and how users perceive the system.

1. Perceived Performance Improvement

Even if a full response takes 10 seconds to generate, streaming the first tokens within one second dramatically improves perceived performance. Users feel the system is responsive and active.
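Perceived performance is commonly tracked as time to first chunk (often called time to first token) alongside total generation time. Here is a minimal sketch that measures both. `slow_model` is hypothetical and simply sleeps before each chunk to imitate generation cost.

```python
import time
from typing import Iterator, Optional


def slow_model(n_chunks: int = 5, delay: float = 0.2) -> Iterator[str]:
    """Hypothetical model that spends `delay` seconds producing each chunk."""
    for i in range(n_chunks):
        time.sleep(delay)
        yield f"chunk{i} "


def measure(stream: Iterator[str]) -> tuple[float, float]:
    """Return (seconds until the first chunk, seconds until the stream ends)."""
    start = time.perf_counter()
    first: Optional[float] = None
    for _ in stream:
        if first is None:
            first = time.perf_counter() - start  # time to first chunk
    total = time.perf_counter() - start
    return (first if first is not None else total), total


if __name__ == "__main__":
    ttfc, total = measure(slow_model())
    print(f"first chunk after {ttfc:.2f}s, full response after {total:.2f}s")
```

With the defaults, the first chunk arrives after roughly 0.2 s and the full reply after roughly 1 s. A non-streaming UI would stay blank for that entire second.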

2. Improved Engagement

Watching a response unfold creates a psychological hook. Similar to live typing indicators in messaging apps, streaming builds anticipation and involvement.

3. Better Error Handling

If something fails mid-response, the user sees partial output rather than a blank failure screen. Developers can also catch the failure where it occurs and decide what to do with the text already received: keep it, mark it as incomplete, or retry.
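Here is a minimal sketch of keeping partial output when a stream fails. `flaky_model` is a hypothetical stream that raises partway through. `ConnectionError` is used as an illustrative failure type, because real model clients raise their own exception classes.

```python
from typing import Iterator


def flaky_model(fail_after: int) -> Iterator[str]:
    """Hypothetical model stream that raises after `fail_after` chunks."""
    words = ["Partial", "results", "are", "still", "useful."]
    for i, word in enumerate(words):
        if i == fail_after:
            raise ConnectionError("stream dropped")
        yield word + " "


def consume_with_fallback(stream: Iterator[str]) -> str:
    """Collect chunks; on failure, keep what arrived and append a visible notice."""
    received: list[str] = []
    try:
        for chunk in stream:
            received.append(chunk)  # a UI would render each chunk here
    except ConnectionError as exc:
        received.append(f"[response interrupted: {exc}]")
    return "".join(received)
```

The user keeps "Partial results" plus a clear marker, instead of losing everything. A retry could also be triggered from the `except` branch.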

4. Enhanced Transparency

Streaming can surface visible reasoning steps, intermediate calculations, or progressive refinement, which is especially useful in AI debugging and educational tools.

How Streamlit Enables Real-Time AI Interfaces

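Streamlit’s appeal lies in its simplicity. Developers write Python scripts that define how the interface behaves, and the framework automatically renders them as a web app. Each user interaction reruns the script from top to bottom, and session state carries data such as chat history across reruns.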

When combined with AI APIs that support streaming, Streamlit can:

- Display text gradually as it’s generated

- Update UI elements dynamically

- Maintain conversational session history

- Trigger reruns of scripts in response to user input
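Here is a minimal sketch of a streaming chat page. It uses Streamlit's chat elements (`st.chat_input`, `st.chat_message`) and `st.write_stream`, which are available in recent Streamlit releases. `fake_token_stream` is a hypothetical placeholder for a real model client's streaming iterator. The final guard relies on `streamlit.runtime.exists()`, so the UI renders only under `streamlit run` and the generator can still be imported on its own.

```python
import time
from typing import Iterator


def fake_token_stream(prompt: str, delay: float = 0.05) -> Iterator[str]:
    """Hypothetical stand-in for a streaming model API: yields one word at a time."""
    reply = f"You said: {prompt}. This reply is streamed word by word."
    for word in reply.split(" "):
        time.sleep(delay)  # imitate generation latency
        yield word + " "


def render_chat() -> None:
    import streamlit as st  # imported lazily so the generator above has no Streamlit dependency

    st.title("Streaming chat sketch")

    # Streamlit reruns this script on every interaction, so history lives in session_state.
    if "messages" not in st.session_state:
        st.session_state.messages = []

    # Replay earlier turns on each rerun.
    for msg in st.session_state.messages:
        with st.chat_message(msg["role"]):
            st.markdown(msg["content"])

    if prompt := st.chat_input("Ask something"):
        st.session_state.messages.append({"role": "user", "content": prompt})
        with st.chat_message("user"):
            st.markdown(prompt)
        with st.chat_message("assistant"):
            # write_stream renders chunks as they arrive and returns the full text.
            reply = st.write_stream(fake_token_stream(prompt))
        st.session_state.messages.append({"role": "assistant", "content": reply})


def running_under_streamlit() -> bool:
    """True only when the script was launched with `streamlit run`."""
    try:
        from streamlit import runtime
    except ImportError:
        return False
    return runtime.exists()


if __name__ == "__main__" and running_under_streamlit():
    render_chat()
```

Save it as, say, `app.py` and launch it with `streamlit run app.py`. No React code or manual WebSocket handling is involved. Streamlit manages the browser connection itself.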

Instead of managing complex frontend technologies like React or WebSockets manually, developers focus on logic and model interaction.

This drastically lowers the barrier to entry. A solo developer or data scientist can build an AI chatbot, summarization tool, or document analyzer in hours rather than weeks.

Common Use Cases for Streaming AI Interfaces

Real-time AI interfaces are now powering a wide range of applications:

AI Chatbots and Assistants

Streaming enhances the conversational feel of AI assistants. Whether serving customers or internal teams, a gradual response feels natural and interactive.

Code Generation Tools

Developers benefit from watching code appear incrementally. This allows them to interrupt, refine prompts, or anticipate structure before the full output is complete.

Document Analysis and Summarization

Large documents can be processed section-by-section, with insights streamed progressively instead of delivered in a single block.
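Here is a minimal sketch of section-by-section streaming that assumes sections are separated by blank lines. `summarize_section` is a hypothetical placeholder that keeps each section's first sentence, where a real app would call a model. Each summary is yielded as soon as its section is done, so the first insight appears before the rest of the document has been processed.

```python
from typing import Iterator


def split_sections(document: str) -> list[str]:
    """Treat blank-line-separated blocks as sections (a simplifying assumption)."""
    return [block.strip() for block in document.split("\n\n") if block.strip()]


def summarize_section(section: str) -> str:
    """Hypothetical placeholder summarizer: keeps the first sentence of the section."""
    return section.split(". ")[0].rstrip(".") + "."


def stream_summaries(document: str) -> Iterator[str]:
    """Yield one summary per section as soon as that section is processed."""
    sections = split_sections(document)
    for i, section in enumerate(sections, start=1):
        yield f"Section {i}/{len(sections)}: {summarize_section(section)}"
```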

Data Exploration Apps

Analytics platforms can stream computed results, progressively refine visualizations, and adapt interactively to new filters or inputs.
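In data apps, streaming often means emitting a progressively better estimate. Here is a minimal sketch that assumes rows arrive in batches (for example, pages of a database query) and yields a running mean after each batch. Each yielded pair could redraw a chart or update a metric.

```python
from typing import Iterable, Iterator


def running_mean(batches: Iterable[list[float]]) -> Iterator[tuple[int, float]]:
    """Yield (rows_seen, mean_so_far) after each non-empty prefix of the data."""
    count = 0
    total = 0.0
    for batch in batches:
        count += len(batch)
        total += sum(batch)
        if count:  # skip leading empty batches to avoid dividing by zero
            yield count, total / count
```

For batches `[1.0, 2.0]`, `[3.0]`, `[6.0]`, the estimate refines from 1.5 to 2.0 to 3.0 as more rows arrive.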

Educational Platforms

AI tutors that reveal reasoning steps gradually create a more immersive and understandable learning experience.

Core Components of a Streaming AI App

Building a high-quality streaming interface typically involves several moving parts:

- Streaming-capable AI model (e.g., token-based output)

- Frontend rendering loop that updates display incrementally

- Session state management for preserving conversation context

- Error handling logic for partial outputs

- Backend infrastructure capable of handling concurrency
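The concurrency requirement can be sketched with Python's asyncio. While one stream waits on its model, the event loop serves another. `model_stream` is a hypothetical async model client, and the shared `log` list stands in for sending chunks over the network.

```python
import asyncio
from typing import AsyncIterator


async def model_stream(name: str, n: int, delay: float) -> AsyncIterator[str]:
    """Hypothetical async model stream; awaiting sleep lets other streams progress."""
    for i in range(n):
        await asyncio.sleep(delay)
        yield f"{name}:{i}"


async def serve(name: str, n: int, delay: float, log: list[str]) -> None:
    """Forward one client's stream; 'sending' here is just appending to a shared log."""
    async for chunk in model_stream(name, n, delay):
        log.append(chunk)


async def main() -> list[str]:
    log: list[str] = []
    # Two clients stream at the same time on a single event loop.
    await asyncio.gather(serve("A", 3, 0.01, log), serve("B", 3, 0.01, log))
    return log


if __name__ == "__main__":
    print(asyncio.run(main()))
```

The chunks from A and B interleave in the log, rather than B waiting for A to finish.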

While frameworks abstract much of this complexity, understanding these elements helps developers design smoother experiences.

Design Challenges in Real-Time AI UIs

Real-time interfaces introduce new design considerations.

Managing User Expectations

If updates arrive too slowly, users perceive the system as lagging. If the display re-renders for every tiny chunk, the extra updates add overhead and visual jitter. Designers must balance chunk size against refresh frequency.
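One common way to strike this balance is to buffer small chunks and flush them together. Here is a minimal sketch that flushes when the buffer reaches `min_chars` characters or when `max_wait` seconds have passed since the last flush. Both default values are illustrative, not recommendations.

```python
import time
from typing import Iterable, Iterator


def batch_chunks(stream: Iterable[str], min_chars: int = 20, max_wait: float = 0.1) -> Iterator[str]:
    """Group tiny chunks into fewer UI updates, flushing by size or elapsed time."""
    buffer: list[str] = []
    size = 0
    last_flush = time.monotonic()
    for chunk in stream:
        buffer.append(chunk)
        size += len(chunk)
        now = time.monotonic()
        if size >= min_chars or now - last_flush >= max_wait:
            yield "".join(buffer)
            buffer, size, last_flush = [], 0, now
    if buffer:
        yield "".join(buffer)  # flush whatever is left when the stream ends
```

The time check runs only when a new chunk arrives. Flushing during a long pause would need a timer on a separate thread or event loop.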

Interruptibility

Many advanced AI apps allow users to stop a generation mid-stream and modify their query. Supporting this interaction requires additional control logic.
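Here is a minimal sketch of that control logic. It uses a `threading.Event` shared between the UI and the streaming loop, and assumes a Stop control somewhere in the UI calls `stop.set()`. When the flag is seen, the wrapper also closes the upstream stream, which lets a generator-based client run its own cleanup.

```python
import threading
from typing import Iterator


def cancellable(stream: Iterator[str], stop: threading.Event) -> Iterator[str]:
    """Forward chunks until `stop` is set, then close the upstream stream.

    The flag is checked between chunks, so cancellation takes effect when the
    next chunk arrives rather than instantly.
    """
    try:
        for chunk in stream:
            if stop.is_set():
                break
            yield chunk
    finally:
        close = getattr(stream, "close", None)
        if close is not None:
            close()  # for generators, this runs their cleanup code
```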

State Synchronization

Maintaining smooth session continuity, especially in multi-user environments, demands careful handling of conversation memory and the model's limited context window.
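Context-window handling usually comes down to choosing which past turns to resend. Here is a minimal sketch that keeps the most recent messages within a budget and always preserves a leading system message. It assumes messages are dicts with `role` and `content` keys, and it uses character counts as a rough stand-in for tokens. A real app would count tokens with the model's own tokenizer.

```python
def trim_history(messages: list[dict[str, str]], max_chars: int) -> list[dict[str, str]]:
    """Keep the newest messages whose combined content fits in `max_chars`."""
    system = messages[:1] if messages and messages[0]["role"] == "system" else []
    budget = max_chars - sum(len(m["content"]) for m in system)
    kept: list[dict[str, str]] = []
    # Walk backwards from the newest message until the budget runs out.
    for msg in reversed(messages[len(system):]):
        if len(msg["content"]) > budget:
            break
        kept.append(msg)
        budget -= len(msg["content"])
    return system + list(reversed(kept))
```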

Security and Cost Management

Long responses consume more tokens and raise API costs, and a generation that keeps running unchecked can become expensive quickly. Developers need output limits and monitoring to prevent runaway generation.
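Here is a minimal sketch of a client-side cap that stops forwarding after `max_chunks` chunks and tells the user why. It reads one chunk past the limit to detect that more output was coming. A cap like this bounds what the UI renders. Bounding what the provider bills generally also requires the model API's own output-length limit.

```python
from typing import Iterable, Iterator


def capped(stream: Iterable[str], max_chunks: int) -> Iterator[str]:
    """Forward at most `max_chunks` chunks; if more arrive, emit a notice and stop."""
    for i, chunk in enumerate(stream):
        if i >= max_chunks:
            yield "[output truncated: limit reached]"
            break
        yield chunk
```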

Beyond Streamlit: Expanding Ecosystems

While Streamlit is popular, the ecosystem continues to expand. Other AI-focused development tools now offer:

- Advanced UI customization

- Collaborative real-time editing

- Integrated model deployment

- Built-in authentication systems

- Scalable cloud infrastructure

Some frameworks lean toward low-code workflows, enabling product teams and analysts to participate in AI app creation without deep programming expertise.

This democratization parallels earlier no-code and low-code revolutions. AI is becoming less of a backend utility and more of an interactive layer woven directly into applications.

The Role of WebSockets and Event-Driven Architectures

At a technical level, many streaming apps rely on persistent communication protocols such as WebSockets or server-sent events (SSE). These technologies enable:

- Bidirectional communication (WebSockets; SSE pushes data from server to client only)

- Low-latency updates

- Continuous server-client synchronization

In an event-driven design, each new piece of output is pushed to the interface as soon as the model produces it, rather than waiting for the client to poll. This infrastructure layer is critical for creating seamless, responsive experiences.
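On the wire, SSE is plain text served with the `text/event-stream` content type. Each event consists of one or more `data:` lines, optionally preceded by an `event:` line, and ends with a blank line. Here is a minimal encoder sketch that wraps model chunks as SSE frames. The final `done` event name is a hypothetical app-level convention, not part of the SSE format.

```python
from typing import Iterable, Iterator, Optional


def sse_event(data: str, event: Optional[str] = None) -> str:
    """Format one server-sent event: optional `event:` line, one `data:` line per
    line of payload, and a blank line that terminates the event."""
    lines = [f"event: {event}"] if event else []
    lines += [f"data: {line}" for line in data.split("\n")]
    return "\n".join(lines) + "\n\n"


def sse_stream(chunks: Iterable[str]) -> Iterator[str]:
    """Wrap model chunks as SSE frames, then signal completion with a named event."""
    for chunk in chunks:
        yield sse_event(chunk)
    yield sse_event("", event="done")  # 'done' is an app convention, not an SSE keyword
```

A browser can consume this stream with `EventSource`, which rejoins multi-line `data:` fields with newlines.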

Best Practices for Building Streaming AI Interfaces

If you’re building a streaming AI app, keep these principles in mind:

- Stream early, stream small: Deliver initial output quickly, even if partial.

- Show typing indicators: Visual cues reduce uncertainty.

- Enable cancellation: Give users control over long generations.

- Optimize token usage: Stream efficiently to manage cost.

- Test responsiveness extensively: Network variability matters.

UX refinement is just as important as model accuracy in these systems.

The Future of Real-Time AI Interfaces

As AI models grow more sophisticated, streaming will likely become the default rather than the exception. We may see:

- Multimodal streaming (text, images, audio simultaneously)

- Live AI-generated visualizations

- Adaptive interfaces that reshape in response to AI output

- Collaborative multi-user AI sessions

Voice assistants already rely on streaming audio generation. Image generation tools are beginning to preview intermediate outputs. Soon, most AI applications will likely rely on incremental visibility to enhance trust and usability.

In the long run, the distinction between “application” and “AI” may blur. Interfaces will stream insight continuously, embedded directly into workflows and communication platforms.

Conclusion

AI response streaming apps like Streamlit are redefining how interactive AI systems are built. By enabling token-level or incremental output delivery, they transform passive AI tools into engaging, conversational platforms. Developers benefit from faster prototyping cycles, reduced frontend complexity, and more intuitive experimentation environments.

Most importantly, users benefit from experiences that feel alive. Instead of staring at static loading screens, they watch intelligence unfold in real time. As expectations for immediacy continue to rise, the ability to build responsive, streaming AI interfaces will become an essential skill in modern software development.

The future of AI isn’t just powerful; it’s interactive, dynamic, and streaming.