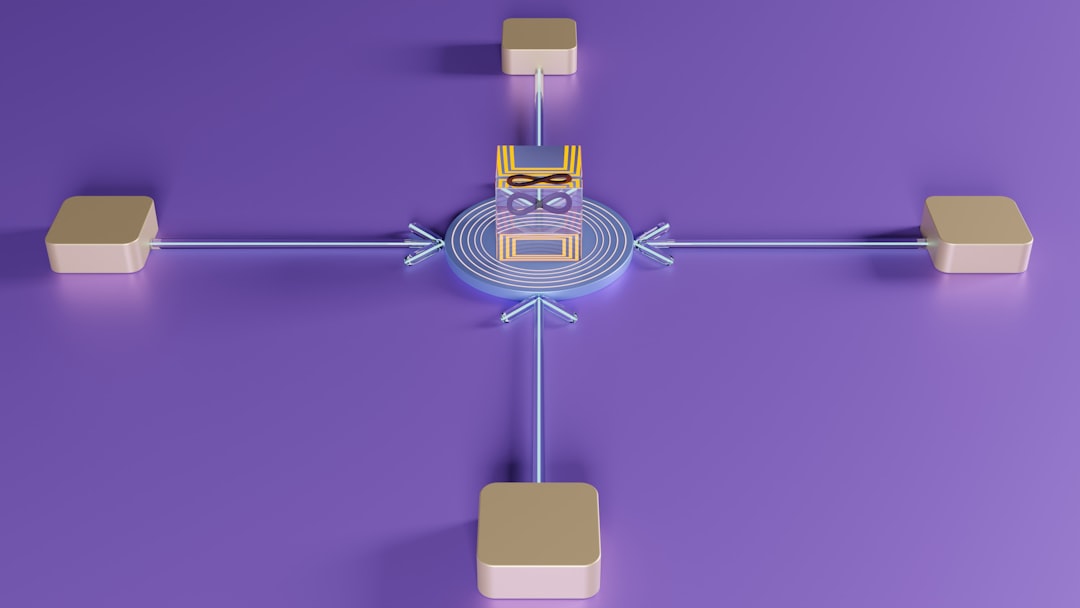

Imagine you have a team of super-smart robots. Each robot is great at something different. One writes beautifully. One summarizes fast. One analyzes numbers. One creates images. But here is the problem. They do not talk to each other very well. That is where prompt routing platforms like LangChain come in. They help you manage all these robots, also called models, in one smooth workflow.

TLDR: Prompt routing platforms like LangChain help you manage multiple AI models in one system. They decide which model should handle which task. They connect tools, data, and logic into clean workflows. This makes building AI apps easier, smarter, and more reliable.

Let’s break it down in a fun and simple way.

What Is Prompt Routing?

Think of prompt routing like a smart traffic controller. Cars are your prompts. Roads lead to different AI models. The controller decides where each car should go.

Not every question needs the same AI model. For example:

- A creative writing task might go to a large language model.

- A math-heavy task might go to a model tuned for reasoning, or to a calculator tool.

- An image generation request goes to an image model.

- A data lookup request goes to a database tool.

Prompt routing platforms automate that decision. They send each request to the best possible tool.

Why Do We Need Multi-Model Workflows?

AI is no longer just one big brain.

Today, companies use:

- Different language models

- Open-source and paid APIs

- Internal company data systems

- Search tools

- Image and audio generators

Each model has strengths and weaknesses. Some are cheap but simple. Others are powerful but expensive. Some are fast. Others are slow but precise.

If you send every task to one giant model, you:

- Waste money

- Lose speed

- Reduce reliability, because one outage stops everything

Multi-model workflows solve this. They help you mix and match models like Lego blocks.

Enter LangChain

LangChain is a framework. It helps developers build applications using language models. But it does more than just call an API.

It allows you to:

- Chain prompts together

- Add tools like search and databases

- Create conditional logic

- Route prompts dynamically

- Store and use memory

Instead of writing messy code for each model interaction, you build structured workflows.

It is like building train tracks instead of pushing the train manually every time.

How Prompt Routing Actually Works

Let’s look at a simple example.

Imagine you build an AI assistant for an online store. The assistant receives different types of user questions:

- “Where is my order?”

- “Write a product description for red sneakers.”

- “What is the return policy?”

- “Show me similar products.”

Each of these needs a different action.

With prompt routing, the system:

- Analyzes the incoming query.

- Classifies its intent.

- Routes it to the correct model or tool.

- Collects the result.

- Returns a clean answer to the user.

You can build this routing logic using:

- Rule-based routing (if X, send to Y)

- LLM-based routing (an AI decides where to send it)

- Hybrid systems (rules + AI combined)

This makes your assistant feel intelligent because, under the hood, it is choosing the right brain for the job.
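Here is a minimal sketch of a hybrid router for the store assistant above, in plain Python. It does not use LangChain's API. Every name in it (`RULES`, `HANDLERS`, `classify_with_llm`, `route`) is made up for illustration, and the "LLM" classifier is just a stub that you would replace with a real model call.

```python
from typing import Callable, Dict, Optional

# Hypothetical handlers. In a real app each would call a model or a tool.
HANDLERS: Dict[str, Callable[[str], str]] = {
    "order_status": lambda q: f"order tool handles: {q}",
    "write_description": lambda q: f"writer model handles: {q}",
    "return_policy": lambda q: f"policy lookup handles: {q}",
    "similar_products": lambda q: f"recommender handles: {q}",
    "general": lambda q: f"general model handles: {q}",
}

# Rule-based stage: if a keyword appears, pick that intent.
RULES = [
    ("order_status", ("where is my order", "track")),
    ("write_description", ("write", "description")),
    ("return_policy", ("return policy", "refund")),
    ("similar_products", ("similar", "recommend")),
]


def classify_with_rules(query: str) -> Optional[str]:
    """Return the first intent whose keywords match, or None."""
    text = query.lower()
    for intent, keywords in RULES:
        if any(keyword in text for keyword in keywords):
            return intent
    return None


def classify_with_llm(query: str) -> str:
    """Stub for an LLM classifier. Assumption: a real one returns an intent name."""
    return "general"


def route(query: str) -> str:
    """Hybrid routing: try the cheap rules first, then fall back to the classifier."""
    intent = classify_with_rules(query) or classify_with_llm(query)
    handler = HANDLERS.get(intent, HANDLERS["general"])
    return handler(query)


if __name__ == "__main__":
    for question in ("Where is my order?", "Show me similar products."):
        print(route(question))
```

The rules run first because they are free and fast. The classifier only runs when no rule matches, which is one common way to keep routing cheap.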

Chains: The Building Blocks

LangChain is famous for something called chains.

A chain is a sequence of steps.

For example:

- Take user input.

- Summarize it.

- Search a database using the summary.

- Send the results to a language model.

- Generate final answer.

Each step connects to the next. Like dominoes.

This is powerful because real AI apps are rarely one-step tasks.

They usually involve:

- Thinking

- Looking up information

- Transforming data

- Reasoning

- Generating output

Chains organize all of that.
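The five-step chain above can be sketched in plain Python as a list of functions, where each output feeds the next step. This is not LangChain's own chain API. The step functions, the tiny `DOCS` database, and `make_chain` are all invented stand-ins, and `generate` only pretends to call a model.

```python
from typing import Any, Callable, List

Step = Callable[[Any], Any]

# Hypothetical document store, keyed by topic.
DOCS = {
    "shipping": "Orders ship within 2 business days.",
    "returns": "Items can be returned within 30 days.",
}


def summarize(text: str) -> str:
    """Stand-in for a summarizer: keep only the first eight words."""
    return " ".join(text.split()[:8])


def search(summary: str) -> dict:
    """Look up every document whose topic appears in the summary."""
    lowered = summary.lower()
    hits = [doc for topic, doc in DOCS.items() if topic in lowered]
    return {"summary": summary, "hits": hits}


def build_prompt(state: dict) -> str:
    """Combine the summary and the search results into one prompt."""
    context = " ".join(state["hits"]) or "no matching documents"
    return f"Question: {state['summary']}\nContext: {context}"


def generate(prompt: str) -> str:
    """Stand-in for a language model call."""
    return f"[model answer based on]\n{prompt}"


def make_chain(steps: List[Step]) -> Step:
    """Return a function that runs the steps in order, like dominoes."""
    def run(value: Any) -> Any:
        for step in steps:
            value = step(value)
        return value
    return run


chain = make_chain([summarize, search, build_prompt, generate])

if __name__ == "__main__":
    print(chain("How do returns work for shoes I bought last week online?"))
```

Because each step is just a function, you can swap one out, or add a new one, without touching the rest.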

Agents: Smart Decision Makers

If chains are train tracks, agents are self-driving cars.

Agents decide what to do next.

They can:

- Choose which tool to use

- Call external APIs

- Search the web

- Retry if something fails

An agent does not follow a fixed path. It thinks step by step.

In multi-model workflows, agents are often used for dynamic routing. They inspect the problem, then decide which model or tool fits best.

This creates flexible systems. Not rigid scripts.
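To make this concrete, here is a heavily simplified agent step in plain Python. It picks a tool, calls it, and retries if the call fails. Real agents usually loop over many steps and let a model make the choice. Here `choose_tool` is a hard-coded stand-in for that decision, and `calculator`, `FlakySearch`, and `run_agent` are hypothetical names.

```python
from typing import Callable, Dict, List, Tuple


def calculator(task: str) -> str:
    """Toy tool: handles only 'a + b' with integers."""
    left, op, right = task.split()
    if op != "+":
        raise ValueError(f"unsupported operator: {op}")
    return str(int(left) + int(right))


class FlakySearch:
    """Toy search tool that fails a fixed number of times before working."""

    def __init__(self, failures: int) -> None:
        self.failures = failures
        self.calls = 0

    def __call__(self, task: str) -> str:
        self.calls += 1
        if self.calls <= self.failures:
            raise ConnectionError("search backend unavailable")
        return f"search results for: {task}"


def choose_tool(task: str) -> str:
    """Stand-in for the model's decision: digits mean math, otherwise search."""
    return "calculator" if any(ch.isdigit() for ch in task) else "search"


def run_agent(
    task: str,
    tools: Dict[str, Callable[[str], str]],
    max_attempts: int = 3,
) -> Tuple[str, List[str]]:
    """Pick a tool, call it, and retry on failure. Returns the result and a log."""
    log: List[str] = []
    tool_name = choose_tool(task)
    for attempt in range(1, max_attempts + 1):
        try:
            result = tools[tool_name](task)
            log.append(f"{tool_name}: ok on attempt {attempt}")
            return result, log
        except Exception as exc:
            log.append(f"{tool_name}: failed on attempt {attempt} ({exc})")
    return "gave up", log


if __name__ == "__main__":
    tools = {"calculator": calculator, "search": FlakySearch(failures=1)}
    print(run_agent("2 + 2", tools))
    print(run_agent("red sneakers", tools))
```

The log is the useful part. It shows which tool the agent picked and how many tries it needed, which helps a lot when debugging.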

Cost Control and Optimization

Here is something practical.

Large AI models can be expensive. Very expensive.

Prompt routing helps reduce cost by:

- Sending simple queries to smaller models

- Using larger models only when necessary

- Reducing repeated API calls

- Caching previous responses

For example:

- “What is 2 + 2?” does not need the most advanced reasoning model.

- A 2,000-word legal draft might.

Routing platforms help automate these decisions. So your system stays efficient.
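A rough sketch of cost-aware routing with a cache, in plain Python. The prices, the keyword list, and the 20-word limit are invented numbers for illustration, not real pricing or a recommended policy. `pick_model` and `CostAwareRouter` are hypothetical names, and the "answer" is a placeholder string instead of a real model call.

```python
from typing import Dict

# Made-up prices per call, only to show the bookkeeping.
PRICE_PER_CALL: Dict[str, float] = {"small": 0.001, "large": 0.03}

# Assumption: these words hint that a prompt needs the larger model.
HARD_MARKERS = ("draft", "legal", "analyze", "contract")


def pick_model(prompt: str, word_limit: int = 20) -> str:
    """Send long or hard-looking prompts to the large model, the rest to the small one."""
    text = prompt.lower()
    is_long = len(text.split()) > word_limit
    looks_hard = any(marker in text for marker in HARD_MARKERS)
    return "large" if is_long or looks_hard else "small"


class CostAwareRouter:
    """Routes prompts by difficulty and caches answers to avoid repeat calls."""

    def __init__(self) -> None:
        self.cache: Dict[str, str] = {}
        self.calls = 0
        self.spent = 0.0

    def ask(self, prompt: str) -> str:
        # A cached answer costs nothing and skips the model entirely.
        if prompt in self.cache:
            return self.cache[prompt]
        model = pick_model(prompt)
        self.calls += 1
        self.spent += PRICE_PER_CALL[model]
        answer = f"[{model}] answer to: {prompt}"  # placeholder for a real call
        self.cache[prompt] = answer
        return answer


if __name__ == "__main__":
    router = CostAwareRouter()
    router.ask("What is 2 + 2?")
    router.ask("What is 2 + 2?")  # served from the cache
    router.ask("Write a 2,000-word legal draft")
    print(router.calls, round(router.spent, 3))
```

An exact-match cache like this only helps when users repeat the same prompt word for word. It is still a cheap first step.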

Adding Memory to Workflows

Conversations feel natural because they have memory.

If you say:

“Book a flight to Paris.”

And then:

“Make it next Friday.”

The system needs context.

LangChain allows you to plug in memory systems.

Memory can be:

- Short-term conversation history

- Long-term user preferences

- Stored embeddings in a vector database

When combined with prompt routing, memory becomes powerful.

The system can:

- Route based on user history

- Personalize model responses

- Retrieve relevant past data

This creates smarter applications.
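Here is a small sketch of short-term memory for the flight example above, in plain Python. It does not use LangChain's memory classes. `ConversationMemory`, `max_turns`, and `build_prompt` are hypothetical names. The idea is simply to keep the last few turns and put them in front of each new message.

```python
from collections import deque
from typing import Deque, Dict, Tuple


class ConversationMemory:
    """Keeps the most recent turns plus a few long-term user preferences."""

    def __init__(self, max_turns: int = 5) -> None:
        # deque with maxlen drops the oldest turn automatically.
        self.turns: Deque[Tuple[str, str]] = deque(maxlen=max_turns)
        self.preferences: Dict[str, str] = {}

    def add(self, role: str, text: str) -> None:
        """Record one turn of the conversation."""
        self.turns.append((role, text))

    def build_prompt(self, new_message: str) -> str:
        """Prefix the new message with preferences and recent history."""
        prefs = ", ".join(f"{k}={v}" for k, v in sorted(self.preferences.items()))
        lines = [f"Preferences: {prefs or 'none'}"]
        lines.extend(f"{role}: {text}" for role, text in self.turns)
        lines.append(f"user: {new_message}")
        return "\n".join(lines)


if __name__ == "__main__":
    memory = ConversationMemory()
    memory.preferences["seat"] = "aisle"
    memory.add("user", "Book a flight to Paris.")
    memory.add("assistant", "Sure. Which date?")
    print(memory.build_prompt("Make it next Friday."))
```

With the history in the prompt, "Make it next Friday." arrives next to "Book a flight to Paris.", so the model has the context it needs.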

Retrieval-Augmented Generation (RAG)

One huge use case is RAG.

RAG means the model does not answer from its training data alone. The system retrieves relevant documents first, and the model answers using them.

The workflow looks like this:

- User asks a question.

- System searches a document database.

- Relevant chunks are retrieved.

- Model generates an answer using those chunks.

Prompt routing platforms manage this flow.

Instead of one single prompt, you now have a multi-step, multi-tool system.

This reduces hallucinations and improves accuracy, because the answer is grounded in retrieved text.
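The four RAG steps above can be sketched in a few lines of plain Python. One big simplification: real systems usually retrieve with embeddings and a vector database, while this sketch scores documents by simple word overlap. `DOCUMENTS`, `retrieve`, and `build_rag_prompt` are made-up names, and the final model call is left out.

```python
import re
from typing import List, Set

# Hypothetical document chunks.
DOCUMENTS = [
    "Returns are accepted within 30 days of delivery.",
    "Standard shipping takes 3 to 5 business days.",
    "Gift cards never expire and cannot be refunded.",
]


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of words and numbers."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: List[str], top_k: int = 1) -> List[str]:
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_rag_prompt(question: str, chunks: List[str]) -> str:
    """Put the retrieved chunks in front of the question."""
    context = "\n".join(f"- {chunk}" for chunk in chunks)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


if __name__ == "__main__":
    question = "How long does shipping take?"
    chunks = retrieve(question, DOCUMENTS)
    print(build_rag_prompt(question, chunks))
```

The prompt tells the model to stick to the context. That instruction, plus good retrieval, is what keeps the answer tied to real data.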

Real-World Use Cases

Let’s look at where this matters.

1. Customer Support Bots

- Route billing questions to database systems.

- Route emotional complaints to empathetic language models.

- Escalate complex issues to humans.

2. Content Creation Platforms

- Use a creative model for storytelling.

- Use an SEO analysis tool for optimization.

- Use another model to summarize long content.

3. Research Assistants

- Search academic papers.

- Summarize findings.

- Compare results across documents.

4. Coding Assistants

- Use one model for code generation.

- Use another for debugging.

- Run tests automatically.

All powered by routing and workflow management.

Why This Is the Future

AI is becoming modular.

Instead of one giant AI doing everything, the future looks like:

- Specialized models

- Connected tools

- Smart routing systems

This is similar to how modern apps work.

Many apps no longer run as one giant program. They are split into microservices.

Prompt routing platforms are like microservices for AI.

They:

- Increase flexibility

- Improve scalability

- Reduce risk

- Allow experimentation

Simple Mental Model

If this still feels complex, remember this:

Models are workers.

Prompts are tasks.

Routing platforms are managers.

A good manager knows:

- Who is best at which task

- How to assign work efficiently

- How to combine results

- How to control costs

That is exactly what LangChain and similar platforms do.

Final Thoughts

Prompt routing platforms like LangChain are not just developer tools. They are orchestration engines.

They turn scattered AI models into coordinated systems.

They make multi-step reasoning possible.

They allow apps to be smarter without being wasteful.

And most importantly, they make building AI applications simpler.

Instead of juggling APIs manually, developers design workflows.

Instead of guessing which model to use, systems decide automatically.

The result?

Faster apps. Cheaper operations. Smarter results.

In a world filled with powerful AI models, the real magic is not just intelligence.

It is coordination.

And that is exactly what prompt routing platforms are built to do.