As organizations rapidly adopt large language models (LLMs) for customer support, software development, research, and internal automation, a new challenge has emerged: how to systematically manage, track, and replay thousands of AI interactions. Ad-hoc prompting through chat interfaces may work for experimentation, but production environments demand structure, traceability, and reproducibility. This is where AI session management platforms such as PromptLayer come in: they act as observability and orchestration layers for LLM-powered systems.

TLDR: AI session management platforms like PromptLayer help teams log, organize, monitor, and replay large language model interactions in production environments. They provide visibility into prompts, responses, performance metrics, and version changes, enabling debugging, optimization, and compliance. By centralizing AI session data, these platforms support collaboration, governance, and experimentation at scale. As AI adoption grows, structured session management becomes essential rather than optional.

The Growing Complexity of LLM Workflows

Early experimentation with LLMs often begins in simple chat interfaces or embedded API calls. However, once companies integrate models into applications, complexity increases significantly. Each interaction may involve:

- Dynamic prompts generated from user input

- Multiple chained model calls

- External tool integrations

- Memory handling across sessions

- Role-based user inputs and permissions

Without structured logging, teams struggle to answer basic questions:

- Which prompt version produced this output?

- Why did performance drop last week?

- What caused this hallucinated response?

- How can we reproduce this error?

Traditional logging systems capture API calls but fail to provide the semantic context needed to understand prompt evolution and AI behavior. Dedicated AI session management platforms fill that gap.

What Is an AI Session Management Platform?

An AI session management platform is a specialized infrastructure layer designed to log, track, version, and replay LLM interactions. Rather than treating each prompt-response exchange as an isolated event, these platforms treat them as structured sessions with metadata, dependencies, and performance signals.

Core capabilities often include:

- Prompt logging: Capturing every prompt sent to an LLM

- Response storage: Recording outputs along with timestamps

- Version control: Tracking prompt changes over time

- Session replay: Reconstructing multi-step conversations

- Performance analytics: Monitoring latency, token usage, and cost

- Tagging and filtering: Organizing interactions by team or feature

Platforms like PromptLayer focus on observability and traceability, allowing teams to treat prompts as first-class assets similar to source code.
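
To make the capability list above more concrete, here is a minimal sketch of what a logged session might look like as a data structure. The class names (`LoggedCall`, `Session`), the field names, and the `filter_by_tag` helper are assumptions made for illustration; they are not PromptLayer's schema or any vendor's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class LoggedCall:
    """One prompt-response exchange (hypothetical schema, not a vendor format)."""
    prompt: str
    response: str
    model: str
    prompt_version: int
    latency_ms: float
    input_tokens: int
    output_tokens: int
    # Timestamp is filled in at logging time, in UTC.
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


@dataclass
class Session:
    """An ordered group of calls that share an ID and a set of tags."""
    session_id: str
    tags: set[str] = field(default_factory=set)
    calls: list[LoggedCall] = field(default_factory=list)

    def log(self, call: LoggedCall) -> None:
        """Append a call, preserving the order in which calls happened."""
        self.calls.append(call)

    def total_tokens(self) -> int:
        """Sum input and output tokens across every call in the session."""
        return sum(c.input_tokens + c.output_tokens for c in self.calls)


def filter_by_tag(sessions: list[Session], tag: str) -> list[Session]:
    """Return the sessions carrying the given tag."""
    return [s for s in sessions if tag in s.tags]
```

Keeping calls in an ordered list under a shared session ID is what would let a tool reconstruct a multi-step conversation later, while tags and per-call metrics support filtering and analytics.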

Why Session Logging Is Critical for Production AI

1. Debugging and Root Cause Analysis

When AI outputs produce unexpected results, teams must diagnose whether the issue originated from the prompt, model choice, temperature settings, or user inputs. A session management platform allows engineers to rewind and inspect the exact sequence of calls that led to the result.

This replay capability reduces guesswork and shortens debugging cycles.

2. Prompt Version Control

Prompts evolve over time as teams refine instructions. Even slight wording changes can significantly affect output quality. Without version tracking, teams risk losing high-performing prompts or introducing regressions.

Platforms like PromptLayer enable side-by-side comparison of prompt iterations, making optimization measurable rather than anecdotal.
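
As a rough illustration of prompt version tracking and side-by-side comparison, the sketch below stores each saved version of a named prompt and produces a unified diff between any two versions using Python's standard `difflib` module. The `PromptRegistry` class and its methods are hypothetical; this is not how PromptLayer or any other platform implements versioning.

```python
import difflib


class PromptRegistry:
    """Keeps every saved version of each named prompt (hypothetical helper)."""

    def __init__(self) -> None:
        self._versions: dict[str, list[str]] = {}

    def save(self, name: str, text: str) -> int:
        """Store a new version and return its 1-based version number."""
        history = self._versions.setdefault(name, [])
        history.append(text)
        return len(history)

    def get(self, name: str, version: int) -> str:
        """Fetch a specific version; versions are numbered from 1."""
        return self._versions[name][version - 1]

    def diff(self, name: str, old: int, new: int) -> str:
        """Unified diff between two versions of the same prompt."""
        lines = difflib.unified_diff(
            self.get(name, old).splitlines(),
            self.get(name, new).splitlines(),
            fromfile=f"{name}@v{old}",
            tofile=f"{name}@v{new}",
            lineterm="",
        )
        return "\n".join(lines)
```

Because old versions are never overwritten, a team could roll back to a known-good prompt or pair each version number with logged quality metrics when comparing iterations.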

3. Performance and Cost Optimization

LLM usage incurs token-based costs. Monitoring token consumption across sessions provides insights into:

- Overly verbose prompts

- Redundant API calls

- High-latency workflows

- Inefficient model selection

With this visibility, organizations can reduce operational expenses while maintaining output quality.
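
The arithmetic behind this kind of cost visibility is simple. The sketch below aggregates cost per product feature from logged token counts. The model names and per-1,000-token prices are invented placeholders, not real vendor rates, and the record keys (`feature`, `model`, `input_tokens`, `output_tokens`) are assumed for the example.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1,000 tokens as (input, output). Not real rates.
PRICE_PER_1K = {
    "small-model": (0.50, 1.50),
    "large-model": (5.00, 15.00),
}


def call_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost of one call: tokens times the per-1K price, for input and output."""
    in_price, out_price = PRICE_PER_1K[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1000


def cost_by_feature(calls: list[dict]) -> dict[str, float]:
    """Total cost of the logged calls, grouped by the feature that made them."""
    totals: dict[str, float] = defaultdict(float)
    for c in calls:
        totals[c["feature"]] += call_cost(
            c["model"], c["input_tokens"], c["output_tokens"]
        )
    return dict(totals)
```

Grouping by feature (or by prompt version, or by team tag) is what turns raw token counts into something actionable, such as spotting a verbose prompt or a workflow that would run acceptably on a cheaper model.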

4. Compliance and Auditability

In regulated industries such as healthcare, finance, and legal services, audit trails are often mandatory. Session logs provide documentation of what the AI system generated and what input triggered it, supporting governance requirements.

Key Platforms in the AI Session Management Space

Several tools are emerging to solve session tracking and observability challenges. Below is a comparison of notable platforms, including PromptLayer.

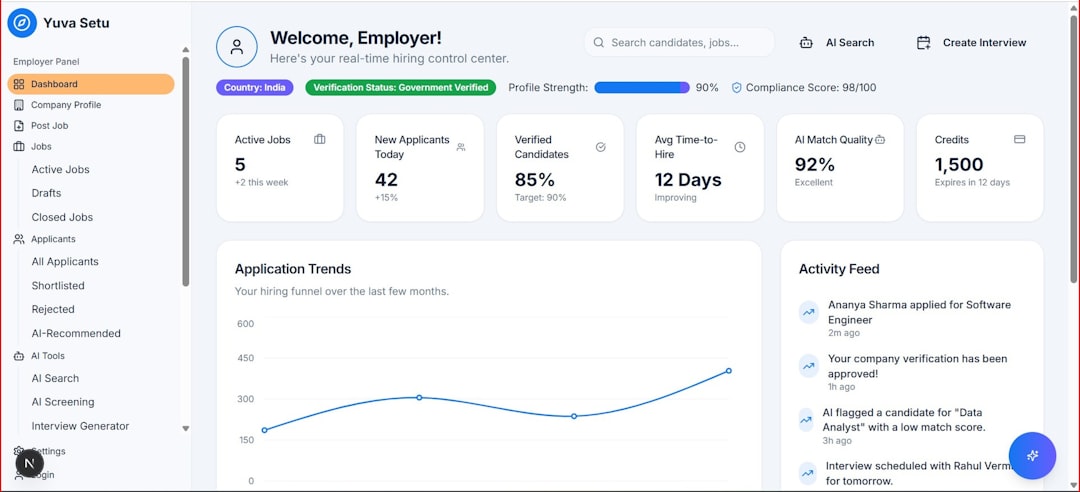

| Platform | Primary Focus | Session Replay | Prompt Versioning | Analytics Dashboard | Best For |
|---|---|---|---|---|---|
| PromptLayer | Prompt logging and observability | Yes | Yes | Yes | Teams optimizing prompt workflows |
| LangSmith | LLM app debugging and tracing | Yes | Partial | Advanced tracing | Developers building complex chains |
| Helicone | LLM API monitoring and analytics | Limited | No | Yes | Cost and usage monitoring |
| Humanloop | Prompt engineering lifecycle management | Yes | Yes | Yes | Teams collaborating on prompt development |

While offerings vary, all aim to increase visibility into AI system behavior. PromptLayer is particularly known for its streamlined approach to prompt history and request tracing.

How Session Replay Improves AI Reliability

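Session replay allows teams to re-issue the exact LLM calls from a logged session, with the original inputs and configurations. Because sampled model outputs are generally non-deterministic, a replay reproduces the request and its context rather than guaranteeing an identical response. This feature is especially valuable in complex pipelines where multiple prompts are chained together.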

Benefits of session replay include:

- Experiment replication: Developers can compare outputs across models.

- Regression testing: Teams can test new prompt versions against historical inputs.

- Incident investigation: Product managers can trace unexpected behaviors.

- Model benchmarking: Replay enables consistent cross-model evaluation.

This structured reproducibility mirrors practices in traditional software engineering, bringing discipline to AI-driven systems.
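
The regression-testing use of replay can be sketched as follows: historical inputs are pushed through a new prompt template and each new output is compared with the one that was logged. The `replay` function, the record keys (`variables`, `output`), and the `fake_model` stand-in are all assumptions for illustration; a real setup would call an actual LLM client and read records from the platform's log store.

```python
from typing import Callable


def replay(
    logged: list[dict],
    new_template: str,
    call_model: Callable[[str], str],
) -> list[dict]:
    """Re-run historical inputs through a new prompt template and flag changes.

    Each logged record is assumed to hold "variables" (the values that were
    substituted into the prompt) and "output" (the response recorded then).
    """
    results = []
    for entry in logged:
        prompt = new_template.format(**entry["variables"])
        new_output = call_model(prompt)
        results.append({
            "prompt": prompt,
            "old_output": entry["output"],
            "new_output": new_output,
            "changed": new_output != entry["output"],
        })
    return results


def fake_model(prompt: str) -> str:
    """Deterministic stand-in for a real LLM client, for illustration only."""
    return prompt.upper()
```

Exact string comparison only makes sense with a deterministic stand-in like this one. With a real model, the `changed` check would more plausibly be replaced by a scoring function or a human review step.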

Organizing LLM Interactions at Scale

As AI adoption expands across departments, interaction volumes multiply. Marketing, customer service, engineering, and operations may all deploy LLM-backed tools simultaneously. Without centralized session management, siloed experimentation leads to:

- Duplicated prompts

- Inconsistent writing styles

- Untracked prompt drift

- Security vulnerabilities

Session management platforms support cross-functional collaboration by enabling:

- Shared prompt libraries

- Access control policies

- Team-level tagging systems

- Standardized prompt templates

This collaborative structure ensures institutional knowledge does not disappear when team members transition roles.

Integration with Development Workflows

Most AI session management platforms integrate directly into application code via SDKs and API wrappers. Rather than changing developer workflows drastically, they layer observability on top of existing infrastructure.

Common integration features include:

- Automatic request logging through middleware

- Support for major LLM providers

- Webhook notifications for anomalies

- Export to analytics tools or data warehouses

By embedding logging into the development pipeline, AI interactions become measurable artifacts rather than transient chat exchanges.
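
One common way to layer logging over existing code without rewriting it is a wrapper or decorator around the function that calls the model. The sketch below shows the general pattern only; `logged_llm_call`, `CALL_LOG`, and `answer` are hypothetical names, and vendor SDKs expose their own interfaces that may look quite different.

```python
import functools
import time
from typing import Callable

# In-memory sink for the example; a real setup would ship records elsewhere.
CALL_LOG: list[dict] = []


def logged_llm_call(tags: tuple[str, ...] = ()) -> Callable:
    """Decorator that records prompt, response, and latency for each call."""
    def decorator(fn: Callable[[str], str]) -> Callable[[str], str]:
        @functools.wraps(fn)
        def wrapper(prompt: str) -> str:
            start = time.perf_counter()
            response = fn(prompt)
            CALL_LOG.append({
                "function": fn.__name__,
                "prompt": prompt,
                "response": response,
                "latency_ms": (time.perf_counter() - start) * 1000,
                "tags": list(tags),
            })
            return response
        return wrapper
    return decorator


@logged_llm_call(tags=("support-bot",))
def answer(prompt: str) -> str:
    # Placeholder for a real provider call.
    return f"echo: {prompt}"
```

The application keeps calling `answer(...)` exactly as before; the logging happens as a side effect, which is why this style of integration tends to require few changes to existing workflows.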

Challenges and Considerations

Despite clear advantages, organizations should consider several factors before implementing an AI session management platform:

- Data privacy: Sensitive prompts must be encrypted and securely stored.

- Storage costs: Logging high volumes of interactions can become expensive.

- Vendor lock-in: Exportability of session data is critical.

- Scalability: The platform must support production-level traffic.

Evaluating compliance requirements and integration flexibility ensures long-term sustainability.
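
On the data privacy point, one mitigation that complements encryption is redacting obvious identifiers before a prompt is ever written to a log. The sketch below is a deliberately simple, assumption-laden example that masks email addresses and long digit runs with regular expressions. The patterns and the `redact` helper are illustrative only and would not catch every form of sensitive data.

```python
import re

# Simple illustrative patterns; real redaction needs broader, audited rules.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
LONG_DIGITS = re.compile(r"\b\d{9,}\b")  # e.g. account or card-like numbers


def redact(text: str) -> str:
    """Mask email addresses and digit runs of nine or more characters."""
    text = EMAIL.sub("[EMAIL]", text)
    return LONG_DIGITS.sub("[NUMBER]", text)
```

Calling `redact(prompt)` before logging keeps the stored record useful for debugging while reducing the amount of sensitive text that has to be protected at rest.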

The Future of AI Observability

As LLM systems become more autonomous and agentic, session observability will extend beyond prompt-response logging into full decision tracking. Future platforms may incorporate:

- Real-time anomaly detection

- Automatic quality scoring

- Feedback loop integration

- Human-in-the-loop review systems

AI session management is likely to evolve into a foundational discipline similar to DevOps monitoring in cloud computing. Organizations that invest early in observability tools are better positioned to scale responsibly.

Conclusion

AI session management platforms like PromptLayer represent a critical layer in the maturing ecosystem of LLM-powered applications. By logging, organizing, and replaying interactions, they bring structure, accountability, and efficiency to AI development. In an era where prompt engineering directly influences business outcomes, systematic oversight is no longer optional. Instead, it is the cornerstone of building reliable, scalable, and compliant AI systems.

FAQ

- What is PromptLayer used for?

  PromptLayer is used to log, monitor, and replay large language model interactions. It helps teams track prompt versions, analyze responses, and debug AI workflows in production environments.

- Why is session replay important in AI systems?

  Session replay allows teams to reproduce exact prompt-response sequences, enabling debugging, regression testing, and performance benchmarking across different models or prompt versions.

- How do session management platforms reduce AI costs?

  They provide visibility into token usage, latency, and redundant API calls. This insight allows teams to optimize prompts, reduce unnecessary requests, and select cost-effective models.

- Are AI session logs secure?

  Most platforms implement encryption and access controls, but organizations must evaluate security standards, compliance certifications, and data retention policies before adoption.

- Can small startups benefit from AI observability tools?

  Yes. Even early-stage teams benefit from structured logging and version control, preventing technical debt as AI features scale.

- How are these platforms different from traditional logging tools?

  Traditional logs capture technical API events, while AI session management platforms track semantic prompt data, model configurations, and conversational context tailored specifically for LLM workflows.